Software:

Unreal Engine 4.24 | Git for Windows | Git LFS | GitHub Desktop

What is this all about?

A game development project is in fact a software development project, and therefore requires Source Control (aka “Version Control”).

A Source Control solution is a software system that records and stores the successive states of the code as it develops, and allows the developers to manage changes, compare different versions of the project, revert to previous versions and much more.

There are several popular Source Control solutions on the market.

This article is written specifically about setting up Source Control for an Unreal Engine 4 project using the Git Source Control software, focusing on working with the GitHub Desktop client app for Windows.

Disclaimer:

Although I’ve already had some experience using Git Source Control, this is the first time I’ve had to set it up by myself, from scratch, specifically for UE4 (using Git LFS), so this article can’t be regarded as an authoritative guide to the subject.

This is simply an informative record of the steps taken, problems encountered, notes, etc.

Hopefully, this post will be helpful, and if you find mistakes, inaccuracies, or steps I forgot, I’d be grateful if you posted a comment.

General Preparations:

- Create a GitHub account:

github.com

Note:

The installations of the Git client tools in the next steps will require your GitHub login credentials (I don’t remember exactly which ones and when, because I was busy getting things to work and didn’t document every step…)

- Install Git for Windows:

gitforwindows.org

Note:

With the installation of Git for Windows we get not only the Git client software, responsible for performing all the Source Control operations, but also Git Bash, which is (in my opinion) a very convenient command-line console specialized for Git operations.

- Install Git LFS:

git-lfs.github.com

Note:

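The Git LFS client is responsible for handling the large binary files, that is, files that are not in plain text format like software source code files, scripts, metadata, settings etc., but typically media files like 3D models, graphic files, audio and the like. Instead of storing such files directly in the Git history, Git LFS keeps a small text pointer in the repository and uploads the actual file content to separate LFS storage on the server.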

We generally don’t have to do anything directly with the Git LFS client: the Git client runs it automatically, and it operates according to settings written in text files placed at the Git repository root folder, namely the .gitattributes file (more detail on this below).
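To give an idea of what those settings look like: each file pattern handled by Git LFS gets one line in the .gitattributes file, in the same form that the command git lfs track "*.uasset" writes. The Python sketch below only builds such lines; the list of extensions is my own illustrative assumption, not an official UE4 preset, and the function names are hypothetical.

```python
# Minimal sketch: build .gitattributes lines that route file patterns through Git LFS.
# The pattern list is an illustrative assumption, not an official UE4 preset.

# Attributes that `git lfs track` writes for each tracked pattern.
LFS_ATTRIBUTES = "filter=lfs diff=lfs merge=lfs -text"

# Hypothetical selection of binary file types found in a typical UE4 project.
UE4_LFS_PATTERNS = ["*.uasset", "*.umap", "*.fbx", "*.png", "*.wav"]


def lfs_attribute_lines(patterns):
    """Return one .gitattributes line per pattern."""
    return [f"{pattern} {LFS_ATTRIBUTES}" for pattern in patterns]


def gitattributes_text(patterns=UE4_LFS_PATTERNS):
    """Return the full text of a .gitattributes file for the given patterns."""
    return "\n".join(lfs_attribute_lines(patterns)) + "\n"
```

For example, print(gitattributes_text()) shows the lines that would go into .gitattributes at the repository root. In practice, running git lfs track for each pattern in Git Bash writes the same lines for you.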

- Optional: Install GitHub Desktop:

desktop.github.com

Note:

This is an optional desktop Git client with a GUI for performing git operations.

You can do everything without it, but it’s convenient, and it was especially helpful for me when setting up a UE4 repository, because it provides preset Git LFS settings (more on that below).

Steps for setting up a UE4 project GitHub repository:

Note:

I’m using GitHub Desktop to initialize the repository with UE4 Git LFS settings and also

- Create an Unreal Engine 4 project (if you haven’t already created one).

Note:

If you want the 3D assets, texture files, etc. to be tracked and version controlled as an integral part of the UE4 project, add a “Raw_Content” or “Raw_Assets” folder inside the UE4 project folder to store them in their editable formats before they are imported into the Unreal project.

Uploading such large binary files to git requires using Git LFS as described below.

- Important: Back up a full copy of the UE4 project somewhere safe, at least for the first steps.

Note:

I actually needed to use this backup to save my own project (see below).

- In GitHub Desktop, choose File > New repository to open the Create a new repository dialog.

- A. In the Name field, type the exact name of the UE4 project (the name of its root folder).

B. Write a short description of your project in the Description field.

C. Set the Local path to the folder containing your project folder, not the project folder itself.

D. In the Git ignore drop-down select the UnrealEngine Option.

E. Press the Create repository button.

Note:

Step D is very important: it creates an initial .gitignore file with settings specific to a UE4 project, namely which files and folders Git should not track or upload. A UE4 project generates massive files that are needed to run the game but are redundant, because they can always be generated again. These files shouldn’t be tracked or uploaded, and without proper settings in the .gitignore file you won’t be able to push (upload) the repository to the remote server.
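One way to see why these settings matter before pushing is to look for large files that are neither ignored nor handled by Git LFS. As far as I know, GitHub rejects pushes that contain regular (non-LFS) files larger than 100 MB. The sketch below assumes that limit and uses a hypothetical, simplified list of ignored top-level folders instead of real .gitignore matching, so treat it only as a rough pre-check; the function names are my own.

```python
# Minimal sketch: list large files that would probably block a push to GitHub.
# Assumptions: a 100 MB limit for regular Git files, and a simplified subset of the
# folders excluded by the UnrealEngine .gitignore preset (real matching is richer).
import os

GITHUB_FILE_LIMIT = 100 * 1024 * 1024  # 100 MB in bytes
IGNORED_TOP_LEVEL_DIRS = {"Binaries", "Intermediate", "Saved", "DerivedDataCache", ".git"}


def project_files(project_root):
    """Yield (relative_path, size_in_bytes) for files outside the ignored top-level folders."""
    project_root = os.fspath(project_root)
    for dirpath, dirnames, filenames in os.walk(project_root):
        if dirpath == project_root:
            # Prune ignored folders only at the project root (a simplification).
            dirnames[:] = [d for d in dirnames if d not in IGNORED_TOP_LEVEL_DIRS]
        for name in filenames:
            full_path = os.path.join(dirpath, name)
            yield os.path.relpath(full_path, project_root), os.path.getsize(full_path)


def push_blockers(entries, limit=GITHUB_FILE_LIMIT, lfs_extensions=()):
    """Return paths larger than `limit` whose extension is not handled by Git LFS."""
    lfs_extensions = {ext.lower() for ext in lfs_extensions}
    return sorted(
        path
        for path, size in entries
        if size > limit and os.path.splitext(path)[1].lower() not in lfs_extensions
    )
```

For example, push_blockers(project_files(r"C:\Projects\MyGame"), lfs_extensions=[".uasset", ".umap"]) would list the offending paths (the project path is a placeholder).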

This is one of GitHub Desktop’s advantages, that it offers these Git Ignore presets.

Another Note:

We could “Publish” the repository to the GitHub server at this stage (see explanation below).

But the reason I don’t recommend performing this action at this stage is that for a UE4 project to be successfully uploaded (“pushed”) to the remote server, we must have proper Git LFS settings, which we haven’t finished setting up yet.

Blender Note:

If you work with Blender and it’s set up to save *.blend1 backup files, it’s recommended to add this file type to the Git Ignore (.gitignore) file by adding the following line to it: *.blend1
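If you prefer to add the pattern with a script rather than by hand, here is a small Python sketch that appends a line to .gitignore only if an identical line isn’t already there. The function name is my own, and the default pattern simply mirrors the Blender case above.

```python
# Minimal sketch: make sure a .gitignore file contains a given pattern.
from pathlib import Path


def ensure_ignore_pattern(gitignore_path, pattern="*.blend1"):
    """Append `pattern` to the .gitignore file unless an identical line already exists.
    Returns True if the file was changed."""
    path = Path(gitignore_path)
    text = path.read_text(encoding="utf-8") if path.exists() else ""
    if pattern in (line.strip() for line in text.splitlines()):
        return False  # already ignored, nothing to do
    # Start on a new line if the existing file doesn't end with one.
    prefix = "" if text == "" or text.endswith("\n") else "\n"
    path.write_text(text + prefix + pattern + "\n", encoding="utf-8")
    return True
```

Running it twice is harmless: the second call finds the line and returns False without touching the file.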

Publishing the new repository to the GitHub server:

Note:

This step includes initiating the remote repository on the GitHub server, setting it as the local repository’s origin, verifying it and pushing (uploading) the current state of the local repository to the remote one.

GitHub Desktop lets us perform all these actions at once with its Publish repository button.

If you wish to know how to perform these operations without using GitHub Desktop, this article gives detailed explanations.
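For reference, here is a rough sketch of the command-line equivalent of setting the origin and pushing, driven from Python. It assumes git is on the PATH, that an empty repository has already been created on github.com (Publish repository does that part for you), and that the local branch is master; the function names and the remote URL below are placeholders of my own.

```python
# Minimal sketch: command-line equivalent of "Publish repository" (origin + first push).
# Assumes `git` is on the PATH and an empty repository already exists on GitHub.
import subprocess


def publish_commands(remote_url, branch="master"):
    """Return the git commands that register `remote_url` as origin and push `branch`."""
    return [
        ["git", "remote", "add", "origin", remote_url],
        ["git", "remote", "-v"],  # list the remotes to verify the origin URL
        ["git", "push", "-u", "origin", branch],  # -u sets origin/branch as upstream
    ]


def run_commands(commands, repo_dir):
    """Run each command inside `repo_dir`, raising CalledProcessError on the first failure."""
    for command in commands:
        subprocess.run(command, cwd=repo_dir, check=True)
```

Usage, with placeholder values: run_commands(publish_commands("https://github.com/your-user/YourProject.git"), repo_dir=r"C:\Projects\YourProject").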

Steps for publishing the new repository with GitHub Desktop:

- Commit the recent changes (Git LFS settings we just set):

In GitHub Desktop (assuming the repository we just created is selected in the Current repository drop-down), in the Changes pane, observe the list of latest changes to the repository. It should include the changed .gitattributes file, and maybe more changes.

In the Description field, type a short description of the changes like “Updated Git LFS settings” for example, and press the Commit to master button to commit the updated state of the repository to the source control history.

- Publish the repository:

The next actions will initialize a remote repository on the GitHub server, set it up as the origin of the local repository, and push (upload) the local repository to the server.

In GitHub Desktop, make sure the new repository is selected in the Current repository drop-down and press the Publish repository button to open the Publish repository dialog.

- In the Publish repository dialog, name the remote repository (AFAIK it doesn’t have to be the same as your local repository’s root folder name, but it’s convenient if it is), type a description for the project, choose whether it should be public or private, and press the Publish repository button.

The repository’s remote origin will now be initiated, and the local repository’s state and commit history will be pushed (uploaded) to the server to update the origin.

* Depending on the size of your local repository, this may take some time…

- Once the process of publishing the new repository has finished, we can browse the new remote repository on GitHub.

Regularly committing and pushing the updated state of the project/repository:

Note:

You might want to commit an updated state of your project to source control at the end of a day’s work to back it up, when some specific development goal has been reached, prior to some significant change, or so that other team members/users can get the latest version of the project. There could be many reasons for committing the current state of the project to Git, but the most important one is that you want to be able to restore this state of the project in the future.

Steps for committing the updated state of the repository to Git and pushing it to the remote server:

- Commit the changes to Git:

- In GitHub Desktop, select the repository of your project in the Current repository drop-down and observe the list of latest changes in the Changes pane, where you can highlight a changed file and see on the right the actual change in code it represents. Uncheck changes you don’t want to commit.

In the Description field, type a short description of the update, and press the Commit to master button to commit the updated state of the repository to the source control history.

- After the latest changes have been committed, you’ll see the new commit appear in the History pane, with an upwards arrow icon indicating it hasn’t been pushed to the server yet.

Highlight the top committed state and press the Push origin button to update the remote repository on the GitHub server.

Note:

When the repository hadn’t yet been created on the remote server, the same button that now has the title Push origin had the title Publish repository.
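The same commit-and-push cycle can also be sketched on the command line, again driven from Python. The optional paths argument plays the role of unchecking changes in GitHub Desktop: only the listed files are staged. Remote and branch default to origin and master, matching the Push origin and Commit to master buttons; the function names are my own.

```python
# Minimal sketch: stage, commit and push, like "Commit to master" followed by "Push origin".
import subprocess


def commit_and_push_commands(message, paths=None, remote="origin", branch="master"):
    """Return git commands that stage changes, commit them with `message`, and push."""
    if paths is None:
        stage = ["git", "add", "--all"]  # stage every change, including deletions
    else:
        stage = ["git", "add", "--", *paths]  # stage only the selected files
    return [
        stage,
        ["git", "commit", "-m", message],
        ["git", "push", remote, branch],
    ]


def run_in_repo(commands, repo_dir):
    """Run each command inside `repo_dir`, stopping at the first failure."""
    for command in commands:
        subprocess.run(command, cwd=repo_dir, check=True)
```

For example, run_in_repo(commit_and_push_commands("Updated Git LFS settings", paths=[".gitattributes"]), repo_dir=".") would commit and push only the .gitattributes change.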

- While committing changes to source control for version management and backup is the basic usage of Git, there are many more source control operations that can be performed, like reverting to past commits (states) of the repository, creating new branches to manage different versions of the project, and merging branches, to name just a few examples. These operations are beyond the scope of this article, and I strongly recommend getting to know them and more.

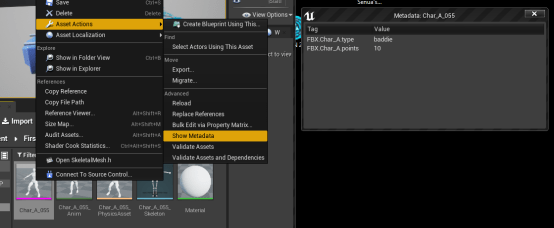

Unreal Editor Git Plugin:

There is a free, open source Git client plugin for the Unreal Editor, developed by Sébastien Rombauts, that ships (at beta stage) with UE4 4.24. It has many useful features integrated into the Unreal Editor, like initializing the project repository with Git LFS settings, committing project states directly from within the Editor, comparing versions of Blueprints, and more.

I did some tests with the plugin and found it very convenient; however, it doesn’t track changes to the project’s C++ code, so if you’re coding you’ll have to commit code changes using a different Git client.

It may be that I just didn’t understand how to use the plugin or how to set it up to track C++ code; I didn’t manage to find out whether it officially doesn’t support this. However, if you work heavily with Blueprints, it should be very useful.

Some issues I encountered:

Note:

I don’t know whether these issues happen frequently; the reason may very well be that I didn’t do things correctly.

There may be simple solutions to these issues that I don’t know about.

What’s absolutely certain is:

When you’re about to take your first steps with source control on a real project, make sure you back it up first!

Corrupted .uproject file:

After the first time I managed to get all the LFS settings right and successfully publish (push) the project, strangely, it wouldn’t launch in the UE Editor, displaying the following message when I tried to double-click the project’s .uproject file:

And displaying the following message when I tried to launch it through the Epic launcher:

After some inquiry I found out (to my astonishment) that somehow the Git operations had replaced the text contents of the .uproject file from this:

To this:

Luckily for me, I had a full backup of the whole project from before starting the setup trial-and-error process, so I could manually restore the .uproject file to its correct state and go on working.
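Since a .uproject file is a plain JSON text file, a quick sanity check after Git operations is whether it still parses. The sketch below also flags a file that has been replaced by a Git LFS pointer (a short text stub that starts with a version line); that this is what happened in my case is only a guess, not something I confirmed. The result labels and function name are my own, and the FileVersion check assumes the usual contents of a UE4 .uproject file.

```python
# Minimal sketch: diagnose a .uproject file that no longer opens.
# Assumption: a healthy .uproject is a JSON object containing a "FileVersion" key.
import json
from pathlib import Path

LFS_POINTER_HEADER = "version https://git-lfs.github.com/spec/v1"


def diagnose_uproject(path):
    """Return 'ok', 'lfs-pointer', 'invalid-json' or 'unexpected-content'."""
    # utf-8-sig also accepts files saved with a byte order mark.
    text = Path(path).read_text(encoding="utf-8-sig", errors="replace")
    if text.startswith(LFS_POINTER_HEADER):
        return "lfs-pointer"  # the real content was swapped for an LFS pointer stub
    try:
        data = json.loads(text)
    except json.JSONDecodeError:
        return "invalid-json"
    if not isinstance(data, dict) or "FileVersion" not in data:
        return "unexpected-content"
    return "ok"
```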

Corruption during download from GitHub:

After a couple of successful commit and push operations with my project, I decided to test how a Git repository works as a backup, so I tried downloading the project folder from GitHub as a zip file.

The extracted project would launch and compile, but fail to load the main (and only) level it had, displaying this message:

Looking into this, I found that the level’s .umap file was actually in its place, but drastically reduced in size:

* The left is the original

The good news:

I then tried to clone the project using the git clone command, and that way it worked as expected.
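A possible explanation, which I haven’t verified: the zip archive may have contained Git LFS pointer files (tiny text stubs) instead of the actual binary content, while a git clone with Git LFS installed downloads the real files. Under that assumption, a sketch like the following would list the suspicious files in an extracted folder. The 1024-byte cutoff is my own assumption based on pointer files being very small, and the function name is hypothetical.

```python
# Minimal sketch: find files that look like Git LFS pointer stubs instead of real content.
import os

LFS_POINTER_HEADER = b"version https://git-lfs.github.com/spec/v1"
MAX_POINTER_SIZE = 1024  # assumed upper bound: pointer files are tiny text files


def find_lfs_pointers(root):
    """Return sorted relative paths of files under `root` that start like an LFS pointer."""
    found = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full_path = os.path.join(dirpath, name)
            if os.path.getsize(full_path) > MAX_POINTER_SIZE:
                continue  # too large to be a pointer file
            with open(full_path, "rb") as f:
                if f.read(len(LFS_POINTER_HEADER)) == LFS_POINTER_HEADER:
                    found.append(os.path.relpath(full_path, root))
    return sorted(found)
```

An empty result would suggest the archive did contain the real binary files, so the cause would have to be something else.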

That’s it!

I hope you’ll find this article useful or even time/error saving.

If you find anything unclear, inaccurate or missing, I’ll be grateful if you leave a comment.

Happy Unrealing and Source Controlling! 🙂