In theory, all clear* refractive surfaces should have their shadow calculated using a refractive caustics calculation in-order to render the refractive lensing** effect correctly, have their transparency color calculated as volumetric absorption of light through the medium in-order to render the color correctly for areas of different thickness, and have not only external reflections, but also internal reflections calculated, in-order to render the interaction between light and the transparent body correctly.

However, for thin surfaces of even thickness, like window glazing and car windshields, these optical effects can be rendered in much cheaper (non physical) methods, with very little compromise on final image quality or look, and even have an easier setup in most cases.

For this reason most popular render engines have object (mesh) and material (shader) parameters that allow configuration of the way these transparency effects will be rendered.

In this short article we’ll cover the different methods for rendering transparency effects, the reasoning behind them and the way to configure these settings in different render-engines.

In the comparison images below (rendered with Cycles), the images on the left were rendered with physically correct glass settings, 8192 samples + denoising,

And the images on the right were rendered with “flat” transparency settings and 1024 samples + denoising.

> See the shader settings below

Note that while for the monkey statue, the fast flat transparency settings produce an unrealistic result, the window glazing model loses very little of its look with the flat fast settings:

Lensing, caustics and transparent shadows:

It’s a common intuitive mistake, that transparent objects don’t cast shadows, but they actually do. they don’t block light, they change its direction. light is refracted through them, gets focused in some areas of their surroundings (caustics) but can’t pass through them directly, so a shadow is created.

A good example of this would be a glass ball, acting like a lens, focusing the light into a tiny area, and otherwise having a regular elliptical shadow. if we tell the render-engine to just let direct light pass through the object we won’t get a correct realistic result, even if the light gets colored by the object’s transparency color.

There is however one case where letting the direct light simply pass through the object can both look correct and save a lot of calculations, and that is when the object is a thin surface with consistent thickness like window glazing.

So in many popular render-engines, when rendering an irregular thick solid transparent body like a glass statue or a glass filled with liquid, we have to counter-intuitively set the object or material to be opaque for direct light and let the indirect refracted light (caustics) create the correct lensing effect (focused light patterns in the shadow area)

> physically, light passing through a material medium is always refracted, i.e. indirect light. but for thin surfaces with even thickness like glazing, the lensing effect is insignificant, and can be completely disregarded by letting light pass directly through the object and be rendered as ‘transparent shadow’.

So the general rule regarding calculating caustics (lensing) vs casting transparent shadows (non physical), is that if the transparent object is a solid irregular shape with varying thickness like a statue or a bottle of liquid it should be rendered as opaque for direct light but with fully calculated caustics i.e. refracted indirect light.

Transparency color:

Physically, the color of transparency*** is always created by volumetric absorption of light traveling within the material medium. as light travels further through a material, more and more of it’s energy gets absorbed in the medium**** (converted to heat), therefore the thicker the object, less light will reach its other side, and it will appear darker. this volumetric absorption of light isn’t consistent for all wave lengths (colors) of light so the object appears to have a color.

For example, common glass, absorbs the red and blue light at a higher rate than green light, and therefore objects seen through it will appear greenish. when we look at the thin side of a common glazing surface we see a darker green color because we see light that has traveled through more glass (through a thicker volume of glass) because of refraction bending the light into the length of the surface. tea, in a glass, generally looks dark orange-brown, but if spilled on the floor it will ‘lose’ its color, and look clear like water because spilled on the floor, it’s too thin to absorb a significant amount of light and appear to have a color.

Most render engines allow setting the transparency (“refraction”/”transmission”) color of the material both as a ‘flat’ non physical filter color, and as a physical RGB light absorption rate (sometimes referred to as ‘fog’ color), that can in some cases be more accurately tuned by additional multiplier or depth parameters.

Setting an object’s transparency color using physical absorption (fog) usually requires more tweaking because in this method, the final rendered color is dependent not only on the color we set at the material/shader, but also on the model’s actual real world thickness.*****

In general, the transparency color of thick, solid, irregularly shaped objects (with varying thickness) must be set as a physical absorption rate color, and not as a simple filter color, otherwise the resulting color will not be affected by the material thickness, and look wrong.

For thin surfaces with consistent thickness, like window glazing, however, it’s more efficient to setup the transparency color as a ‘flat’ filter color, because it’s more convenient and predictable to setup, and produced a correct looking result.

For example, if we need to render an Architectural glazing surface that will filter exactly 50 percent of the light passing through it, it’s much simpler to set it up using a simple 50% grey transparency filter color, because this method disregards the glass model’s thickness. This approach isn’t physical, but for an evenly thick glazing surface, the result has no apparent difference from a physical volumetric absorption approach to the same task.

Internal reflections:

It’s not intuitive to think that the air surface itself has reflections when seen through a transparent material volume like water or glass.

Viewed from under water, the air surface above, acts like a mirror for certain angles, reflecting objects that are under water. a glass ball lit by a lamp has a very distinct highlight, which is the reflected image of the light source itself (specular reflection), but it also has an internal highlight appearing on inside where the glass volume meets the air volume. we can easily ‘miss’ this internal highlight because in many cases it’s appearance converges with the bright focused light behind the ball, caused lensing (refractive caustics). the distinctly shiny appearance of diamonds, for example, is very much dependent on bright internal reflections, diamond cutting patterns are specifically designed to reflect a large percentage of light back to the viewer and look shiny, and if we wish to create a realistic rendering of diamonds, we will not only have to setup the correct refractive index for the material, but also model the geometric shape of the diamond correctly, and of course, set the material to render both external (“regular”******) reflections and internal reflections.

Your probably already guessing what I’m about to say next..

For thin surfaces with even thickness, the internal reflection is barely noticeable, because it converges with the main surface reflection, an for this reason, when rendering window glazing, car windshields, and the like, we can usually turn the internal reflections calculation off to save render time.

Render Settings:

Simplified settings summary table:

|

Flat (Glazing) |

Physical (irregular volume) |

| Shadow |

Transparent |

Caustics |

| Color |

Filter |

Volumetric Absorption |

| Reflections |

External only |

External and Internal |

Example Cycles (Blender) shaders:

> The Flat glazing shader is actually more complex to define since it involves defining different types of calculations per different type of rays being traced (cheating).

In general, for Shadow and Diffuse rays that shader is calculated as a simple Transparent shader and nor a refraction shader, and when back-facing, the shader is calculated as pure white transparent instead of glossy to remove the internal reflections.

> While the flat glazing shader is only connected to the Surface input of the material output, the physical glass shader has also a Volume Absorption BSDf node connected to the Volume input of the material output node.

> Note that a simple Principled BSDF material will have flat transparency and physical shadow (caustics) by default.

> For caustics to be calculated, the Refractive Caustics option has to be enabled in the Light Path > Caustics settings in the Cycles render settings.

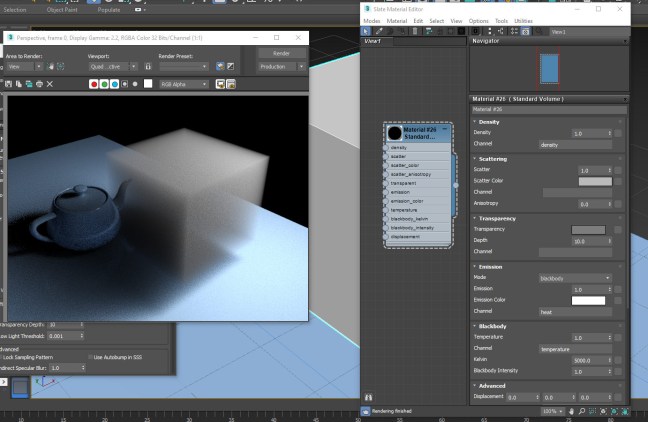

Example V-Ray Next for 3ds max material settings:

> In V-Ray for 3ds max (and Maya) the Affect Shadows parameter in the VrayMtl Refraction settings determines weather the shadows will be fake transparent shadows suitable for glazing or (on) or opaque (off) which is the suitable setting for caustics.

> The caustics calculation is either GI Caustics which is activated by default in the main GI settings or a dedicated Caustics calculation that can be activated, also in the GI settings.

> For flat glazing the color is defined as Refraction Color and for physical glass the Refraction color is pure white and the glass color is set as Fog color.

Example Arnold for Maya settings:

> In Arnold 5 for Maya the Opaque setting in the shape node Arnold attributes must be unchecked for transparent shadows, and checked for opaque shadows suitable for caustics.

> For rendering refractive caustics in Arnold for Maya more settings are needed.

> When the Transmission Depth attribute is set to 0 the Transmission Color will be rendered as flat filter color, and when the Transmission Depth attribute is a value higher than 0 the transparency color will be calculated as volumetric absorption reaching the Transmission Color at the specified depth.

General notes:

> in Brute Force Path Tracers like Cycles and Arnold the Caustics calculation is actually a Diffuse indirect light path. this seems un-intuitive, but the light pattern appearing on a table surface in the shadow of a transparent glass is actually part of the table surface’s diffuse reflection phenomenon.

> what we refer to as ‘Diffuse Color’ in dielectric (non-metals) is actually a simplification of absorption of light scattered inside the object volume (SSS).

* Optically all dielectric materials (non-metals) are refractive, but not all of them are also clear, the is, most of them actually have micro particles or structures within their volume, that scatter and absorb light that travels through them, creating the effects we’re used to refer to as “Subsurface Scattering” (SSS) and in the higher densities “Diffuse reflection”.

** Lensing is a term used to describe the effect of a material medium bending light, focusing and dispersing it, and so acting as a lens.

*** Actually all color in dielectric (non metallic) materials is created by Volumetric Absorption.

**** Light isn’t only absorbed as it travels through medium, it’s also scattered.

***** Volumetric shading effects usually use the model original scale (the true mesh scale), so to avoid unexpected results it’s best that the object’s transform scale will be 1.0 (or 100% depending on program annotation)

Related Posts:

> Cycles Nested Transparencies

> Arnold for Maya Refractive Caustics

> Arnold for Maya Transmission Scattering

> Understanding Fresnel Reflections

> Advanced Architectural Glazing shader for Blender

> V-Ray Underwater Rendering